The Trust Gap in AI Email Support

Customers don't dislike AI in support — they dislike feeling deceived by it. Survey after survey shows that customers are comfortable with AI handling their support emails when three conditions are met: they're told AI is involved, they get fast and accurate answers, and they have a clear path to a human when needed. When any of these break down, satisfaction collapses fast — often more sharply than for human-handled tickets that simply went poorly.

This playbook covers the transparency practices that actually build trust, drawn from production deployments handling millions of AI-resolved emails per month.

Principle 1: Disclose AI Involvement

The single most important transparency practice is telling customers when AI is involved. Not buried in a privacy policy footer — in the response itself.

Effective disclosure language:

- Soft disclosure: “This response was prepared with AI assistance. If you need further help, just reply.”

- Direct disclosure: “Hi [Name], I'm Robin, the AI assistant for [Company] support. I've looked into your question and here's what I found.”

- Mixed-mode disclosure: “Our AI assistant helped draft this response, and a member of our team reviewed it before sending.”

Test which framing performs best with your audience. Some segments prefer transparent AI personas (a named AI assistant); others prefer the human company voice with a small AI disclosure line. Both work; what doesn't work is hiding the AI involvement entirely.
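As a rough illustration, the three disclosure styles above could be attached to an outgoing reply along these lines. This is a minimal sketch, not a reference implementation: the names `DISCLOSURES` and `add_disclosure` are hypothetical, and placing the direct disclosure at the top and the others at the bottom is an assumption you should test with your own audience.

```python
# Hypothetical templates, copied from the example wording above.
DISCLOSURES = {
    "soft": "This response was prepared with AI assistance. "
            "If you need further help, just reply.",
    "direct": "Hi {name}, I'm Robin, the AI assistant for {company} support. "
              "I've looked into your question and here's what I found.",
    "mixed": "Our AI assistant helped draft this response, and a member of "
             "our team reviewed it before sending.",
}


def add_disclosure(body: str, style: str, name: str = "", company: str = "") -> str:
    """Put the disclosure in the reply body itself, not in a policy footer."""
    if style not in DISCLOSURES:
        raise ValueError(f"unknown disclosure style: {style}")
    line = DISCLOSURES[style].format(name=name, company=company)
    # Assumption: a named AI persona introduces itself first;
    # the softer styles close the email instead.
    if style == "direct":
        return f"{line}\n\n{body}"
    return f"{body}\n\n{line}"
```

Keeping the style as a single parameter makes it straightforward to A/B test framings per customer segment.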

Principle 2: Make Human Escalation One Click Away

Every AI-handled email should include an obvious path to human help. The customer's confidence in the AI grows when they know they can easily exit it.

Effective escalation patterns:

- “Reply with AGENT”: Simple keyword that immediately routes to a human

- Direct human contact: Include the support team's email or phone in every AI response

- One-click escalation link: A link that creates a human-routed ticket with the conversation history

- “Was this helpful?” prompt: A “No” answer triggers immediate human escalation

The presence of an easy human option, paradoxically, makes customers more willing to engage with AI — because they're not trapped in it.
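The keyword and feedback patterns above might be wired together roughly as follows. This is a sketch under stated assumptions: `should_escalate` is a hypothetical helper, and matching AGENT as a standalone word (in any case) is a design choice that favours over-escalating rather than leaving a customer stuck.

```python
import re
from typing import Optional

# Matches "AGENT" as a standalone word, in any letter case.
AGENT_KEYWORD = re.compile(r"\bAGENT\b", re.IGNORECASE)


def should_escalate(reply_text: str, helpful_vote: Optional[bool] = None) -> bool:
    """Return True when the customer has asked for a human in a supported way.

    helpful_vote is the answer to "Was this helpful?":
    True, False, or None if the customer did not vote.
    """
    if helpful_vote is False:
        # A "No" vote escalates immediately, without another AI attempt.
        return True
    return bool(AGENT_KEYWORD.search(reply_text))
```

For example, `should_escalate("Please get me an agent")` returns `True`, while a plain "Thanks, that worked" with no vote does not escalate.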

Principle 3: Communicate Confidence and Limitations

When the AI isn't certain, say so. Calibrated confidence is far more trustworthy than false certainty.

Examples:

- “Based on your account, your subscription renews on March 15. Let me know if this doesn't match what you were expecting.”

- “I found one possible match for your order — #12345 from January 10. If this isn't the right one, please share the order number and I'll look again.”

- “This question requires more context than I have. I've passed it to our team, who will respond within [SLA].”

Hedging language that's grounded in actual uncertainty is honest. Hedging language used to make wrong answers sound less wrong is corrosive.
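One way to keep hedging grounded in actual uncertainty is to tie the wording to a confidence score. The sketch below assumes your system exposes such a score between 0 and 1; the function name `frame_answer` and both thresholds are hypothetical and would need tuning against real outcomes.

```python
def frame_answer(answer: str, confidence: float, sla: str,
                 hedge_below: float = 0.9, handoff_below: float = 0.6) -> str:
    """Choose wording that matches how sure the AI actually is.

    Thresholds are illustrative assumptions, not recommended values.
    """
    if confidence < handoff_below:
        # Too uncertain to answer: hand off rather than guess.
        return ("This question requires more context than I have. "
                f"I've passed it to our team, who will respond within {sla}.")
    if confidence < hedge_below:
        # Plausible but not certain: answer and invite correction.
        return f"{answer} Let me know if this doesn't match what you were expecting."
    # High confidence: state the answer plainly, with no decorative hedging.
    return answer
```

The point of the top branch is that confident answers carry no hedge at all, so the hedge remains a meaningful signal when it does appear.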

Principle 4: Be Honest When the AI Made a Mistake

AI will occasionally get things wrong. The recovery is what determines whether the relationship survives.

Effective recovery protocols:

- When a customer corrects an AI response, the follow-up should acknowledge the error directly: “You're right — I had that wrong. The correct answer is...”

- For consequential errors (incorrect refund amount, wrong policy quoted), immediately route to a human for follow-up

- Track AI errors systematically and share aggregate patterns with the team — transparency internally drives improvement

What destroys trust: doubling down on a wrong answer, refusing to acknowledge a mistake, or routing the customer through additional AI loops after they've already been frustrated.
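The recovery protocol above can be sketched as a small error log that both counts mistakes and decides who follows up. The category names and the `ErrorLog` class are hypothetical; which errors count as consequential is a policy decision for your team.

```python
from collections import Counter
from dataclasses import dataclass, field

# Assumed categories: errors serious enough to need a human follow-up.
CONSEQUENTIAL = {"refund_amount", "policy_quote"}


@dataclass
class ErrorLog:
    counts: Counter = field(default_factory=Counter)

    def record(self, category: str) -> str:
        """Count the error, then return who should handle the follow-up."""
        self.counts[category] += 1
        if category in CONSEQUENTIAL:
            return "human"
        # Minor errors: the AI acknowledges the mistake directly and corrects it.
        return "ai_acknowledge"

    def top_patterns(self, n: int = 3):
        """Aggregate view to share with the team."""
        return self.counts.most_common(n)
```

Because every error passes through `record`, the aggregate patterns come for free rather than depending on someone remembering to log them.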

Principle 5: Show Your Work

For complex queries, transparency about how the AI reached its answer increases trust:

- Cite the specific policy or knowledge base article

- Show the data the response is based on (“According to your order #12345 placed on...”)

- Reference the specific terms of the customer's plan or contract

- For numerical answers, show the calculation

This isn't about overwhelming the customer with detail — it's about giving them the building blocks to verify the answer themselves if they want to.
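For numerical answers, showing the calculation can be as simple as formatting the inputs next to the result. The example below uses a prorated refund purely as an illustration; the function name, the proration rule, and the cited source are all hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP


def explain_proration(monthly_price: Decimal, days_unused: int,
                      days_in_period: int, source: str) -> str:
    """Return the refund together with the inputs and the policy it rests on."""
    refund = (monthly_price * days_unused / days_in_period).quantize(
        Decimal("0.01"), rounding=ROUND_HALF_UP
    )
    return (f"Refund: {monthly_price} x {days_unused}/{days_in_period} days "
            f"= {refund} (source: {source})")


print(explain_proration(Decimal("30.00"), 10, 30, "Refund policy, section 2"))
# Refund: 30.00 x 10/30 days = 10.00 (source: Refund policy, section 2)
```

`Decimal` is used instead of floats so the amount shown to the customer matches what a billing system would compute to the cent.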

Principle 6: Set Expectations on AI Capabilities

If your AI doesn't handle certain ticket types, say so upfront on your contact page:

- “Our AI assistant handles most order, account, and policy questions instantly. For complex billing disputes, technical issues, or sensitive matters, our human team responds within [SLA].”

- Channel customers to the right starting point based on their query type

- Don't promise AI capabilities you don't have

Principle 7: Respect Customer Preferences

Some customers will explicitly prefer human interaction. Honour this preference:

- If a customer asks to speak to a human, route immediately — don't loop them through more AI

- Track customer preferences over time — some accounts should default to human handling

- For high-value customers, default human handling may be the right policy regardless of query type
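The preference rules above might translate into a routing check like this one. It is a sketch only: the `Customer` fields, the `choose_handler` name, and the threshold of two human requests before an account defaults to human handling are assumptions.

```python
from dataclasses import dataclass

# Assumed threshold: after this many requests, default the account to humans.
HUMAN_DEFAULT_AFTER = 2


@dataclass
class Customer:
    high_value: bool = False
    human_requests: int = 0  # how often this customer has asked for a human


def choose_handler(customer: Customer, asked_for_human: bool) -> str:
    """Return "human" or "ai" for the next message from this customer."""
    if asked_for_human:
        # Route immediately and remember the preference; no extra AI loop.
        customer.human_requests += 1
        return "human"
    if customer.high_value or customer.human_requests >= HUMAN_DEFAULT_AFTER:
        return "human"
    return "ai"
```

The explicit request is checked first so that no other rule can ever send a customer who asked for a person back to the AI.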

Principle 8: Privacy Transparency

Be clear about what you do with customer data:

- What data the AI processes

- How long it's retained

- Whether it's used to improve the AI

- How the customer can request deletion

Privacy policies that bury AI processing details lose trust when customers eventually find them. Lead with the AI processing disclosure during onboarding.

Measuring Trust

- CSAT for AI-handled vs human-handled tickets: Should converge over time as AI quality improves

- Reopen rate: Lower reopen rates on AI tickets indicate genuine resolution

- Human escalation rate: Should stabilise after the first month. Too high suggests the AI's scope is wrong; too low may mean customers feel trapped

- Customer feedback themes: Monitor for “I felt like I was talking to a robot” or “The AI didn't understand me” signals
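The quantitative metrics above can be computed from ticket records in a few lines. The sketch below assumes a hypothetical `Ticket` record in which `first_handler` says who answered first and `escalated` marks AI tickets that were later handed to a human; your helpdesk export will likely use different field names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Ticket:
    first_handler: str        # "ai" or "human"
    csat: Optional[int]       # survey score, None if the customer did not answer
    reopened: bool = False
    escalated: bool = False   # AI ticket later handed to a human


def _mean_csat(tickets: List[Ticket]) -> Optional[float]:
    scores = [t.csat for t in tickets if t.csat is not None]
    return sum(scores) / len(scores) if scores else None


def trust_metrics(tickets: List[Ticket]) -> dict:
    ai = [t for t in tickets if t.first_handler == "ai"]
    human = [t for t in tickets if t.first_handler == "human"]
    ai_csat, human_csat = _mean_csat(ai), _mean_csat(human)
    # The gap should shrink toward zero as AI quality improves.
    gap = None if ai_csat is None or human_csat is None else human_csat - ai_csat
    return {
        "csat_gap": gap,
        "ai_reopen_rate": sum(t.reopened for t in ai) / len(ai) if ai else None,
        "ai_escalation_rate": sum(t.escalated for t in ai) / len(ai) if ai else None,
    }
```

Tracking these per week rather than as a single snapshot makes it easier to see whether CSAT is converging and whether escalation has stabilised.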

Bottom Line

Trust in AI email support isn't a marketing problem — it's an operational discipline. Transparent disclosure, easy human escalation, calibrated confidence, honest error recovery, and respect for customer preferences are not separate from operational quality. They are operational quality. The teams that get this right see CSAT improve after deploying AI, not in spite of it.

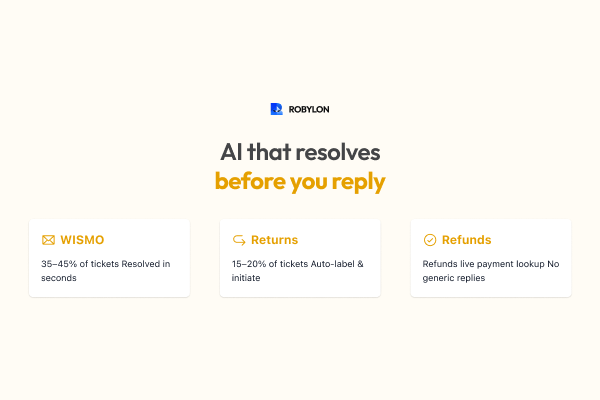

Robylon AI is built for transparent customer experiences — with built-in disclosure, easy escalation, and calibrated confidence framing. Start free at robylon.ai

FAQs

What metrics measure trust in AI email support?

Track CSAT for AI vs human-handled tickets, reopen rates, escalation rates, and customer feedback themes. CSAT should converge over time. High escalation rates indicate wrong AI scope. Watch for “I felt like I was talking to a robot” signals in qualitative feedback.

How should AI handle its own mistakes?

For consequential errors, immediately route to a human for follow-up. Acknowledge mistakes directly (“You're right — I had that wrong”) and track patterns systematically. What destroys trust: doubling down on wrong answers, refusing to acknowledge mistakes, or routing through more AI loops after frustration.

What human escalation patterns work best?

Use a “Reply with AGENT” keyword, direct human contact details in every response, one-click escalation links, or a “Was this helpful?” prompt where a “No” answer escalates immediately. The presence of an easy human option paradoxically makes customers more willing to engage with AI.

How should AI involvement be disclosed in email responses?

Three patterns work: soft disclosure (“This response was prepared with AI assistance”), direct disclosure (“I'm Robin, the AI assistant”), and mixed-mode disclosure (“Our AI helped draft this and a team member reviewed it”). Test which framing performs best with your audience.

What conditions make customers comfortable with AI support?

Customers are comfortable with AI handling support emails when three conditions are met: they're told AI is involved, they get fast and accurate answers, and they have a clear path to a human when needed. Break any one and satisfaction collapses sharply.
