You have decided to bring AI into your customer support operation. The natural next question: which channel should you automate first?

Most companies default to chat. It is the channel with the most visible AI products (chatbots have been mainstream for years), the easiest demos (watch the bot respond in real time), and the strongest marketing narratives. Chat is where the attention goes.

But attention and ROI are different things. When you compare the three primary support channels — email, chat, and voice — across the dimensions that actually determine return on investment, a different picture emerges. Email is not the flashiest channel to automate. It is the most valuable.

This is not opinion — it is math. Here is the analysis.

The 7 Dimensions That Determine AI Support ROI

1. Cost Per Ticket (Before AI)

The starting cost per ticket determines how much savings automation can unlock. Higher starting costs mean more room for ROI.

- Email: $5–$15 per ticket. Agents spend 8–15 minutes per email: reading the full message, researching the issue, drafting a complete response, often across multiple reply cycles. That time intensity gives email the highest per-ticket cost of the two text channels, second only to voice overall.

- Chat: $3–$8 per conversation. Chat interactions are shorter (5–10 minutes) and agents can handle 2–3 chats simultaneously, which distributes cost.

- Voice: $6–$20 per call. Phone calls are expensive because agents handle one call at a time, calls average 6–12 minutes, and telephony infrastructure adds overhead.

Winner: Voice has the highest per-ticket cost, with email close behind; chat is the cheapest. But cost per ticket is only half the equation: you also need to consider how much of the volume AI can actually automate.
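The cost differences above come from two inputs the bullets mention: handle time, and how many interactions an agent works at once. A minimal sketch, assuming a hypothetical $40/hour fully loaded agent cost and a hypothetical $1.50 telephony overhead per call (neither figure comes from this article):

```python
HOURLY_COST = 40.0  # hypothetical fully loaded agent cost in USD per hour


def cost_per_ticket(hourly_cost: float, handle_minutes: float,
                    concurrency: int = 1, overhead_per_ticket: float = 0.0) -> float:
    """Estimate the human-handled cost of one ticket.

    Labor cost is the agent's hourly cost times handle time, divided by how
    many interactions the agent works in parallel. Fixed per-ticket overhead
    (for example, telephony) is added on top.
    """
    if concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    labor = hourly_cost * handle_minutes / 60 / concurrency
    return labor + overhead_per_ticket


# Handle times sit inside the ranges quoted above; concurrency and overhead are assumptions.
print(f"email: ${cost_per_ticket(HOURLY_COST, 12):.2f}")                           # email: $8.00
print(f"chat:  ${cost_per_ticket(HOURLY_COST, 10, concurrency=2):.2f}")           # chat:  $3.33
print(f"voice: ${cost_per_ticket(HOURLY_COST, 9, overhead_per_ticket=1.5):.2f}")  # voice: $7.50
```

Plugging in your own loaded cost and measured handle times is a quick way to see where your operation falls within these ranges.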

2. Achievable Automation Rate

The percentage of interactions AI can fully resolve without human involvement directly determines the magnitude of savings.

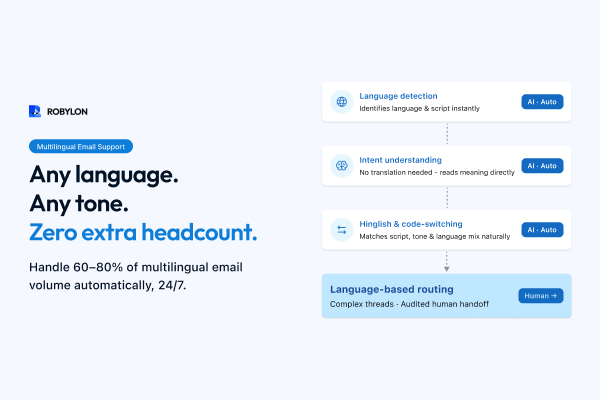

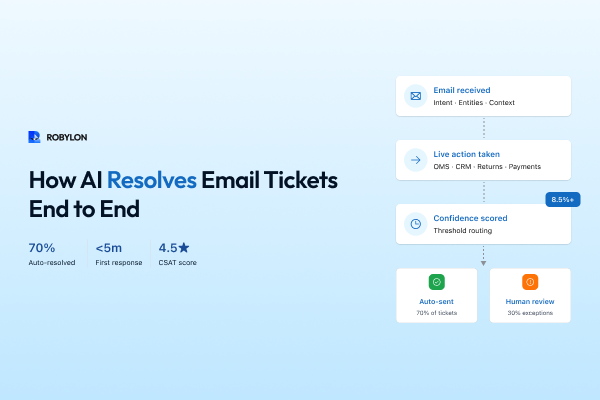

- Email: 60–80% auto-resolution. Email queries are longer, contain more context, and follow more predictable patterns. The AI has the full message to work with — no need for back-and-forth clarification. Multi-turn resolution (refund process, return initiation) works well because email is naturally asynchronous.

- Chat: 40–65% auto-resolution. Chat messages are shorter and more ambiguous. Customers type fragments: "order status," "refund?", "doesn't work." The AI often needs to ask clarifying questions, which adds turns and lowers the resolution rate. Customers also have less patience for chatbots, since they expect a human behind the widget.

- Voice: 30–50% auto-resolution. Voice AI has made remarkable progress, but understanding spoken language (accents, background noise, emotional tone) is harder than parsing text. Complex voice interactions still feel robotic. And many customers calling support have already tried self-service and are specifically seeking a human — making them less receptive to AI.

Winner: Email, by a significant margin. The combination of rich context, asynchronous nature, and text-based processing gives email AI the highest achievable automation rate.

3. Speed to Deploy

How quickly you can go from "we decided to use AI" to "AI is handling real customer interactions" directly affects time-to-value.

- Email: 1–4 weeks. Connect your helpdesk, upload your knowledge base, configure confidence thresholds, run shadow mode for a week, go live. No front-end widget to design, no real-time latency to optimize, no telephony infrastructure to set up.

- Chat: 1–3 weeks. Similar to email, but requires widget design and placement, real-time response optimization (customers notice latency), and handling of concurrent conversations. Slightly faster to demo but similar production timeline.

- Voice: 4–12 weeks. Voice AI requires telephony integration (Twilio, Exotel, or similar), speech-to-text calibration, text-to-speech voice selection and tuning, latency optimization (customers are extremely sensitive to pauses in phone conversations), and extensive testing for accent and noise handling. Significantly slower to deploy than text channels.

Winner: Email and chat are tied for fastest deployment. Voice takes 3–5x longer.
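The email deployment steps mention configuring confidence thresholds and running shadow mode. One way to connect the two, sketched here under assumptions: during shadow mode the AI drafts replies that are never sent, a reviewer marks each draft correct or not, and you pick the threshold that would auto-send the most tickets while keeping accuracy above a target. The log data, candidate thresholds, and 80% precision target below are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class ShadowResult:
    confidence: float  # model confidence for its unsent draft, 0 to 1
    correct: bool      # reviewer verdict on the draft


def pick_threshold(results: Sequence[ShadowResult], candidates: Sequence[float],
                   min_precision: float) -> Optional[Tuple[float, float, float]]:
    """Return (threshold, coverage, precision) for the candidate that would
    auto-send the largest share of tickets while meeting min_precision."""
    best = None
    for t in sorted(candidates):
        sent = [r for r in results if r.confidence >= t]
        if not sent:
            continue
        precision = sum(r.correct for r in sent) / len(sent)
        coverage = len(sent) / len(results)
        if precision >= min_precision and (best is None or coverage > best[1]):
            best = (t, coverage, precision)
    return best


# Hypothetical shadow-mode log: (confidence, reviewer said the draft was correct)
SHADOW_LOG = [ShadowResult(c, ok) for c, ok in [
    (0.95, True), (0.92, True), (0.90, True), (0.88, True), (0.85, False),
    (0.80, True), (0.75, True), (0.70, False), (0.60, False), (0.50, True),
]]

print(pick_threshold(SHADOW_LOG, [0.6, 0.7, 0.8, 0.9], min_precision=0.8))
# (0.8, 0.6, 0.8333333333333334)
```

Raising the target to 0.9 on the same log would select a 0.9 threshold and auto-send only 30% of tickets. That coverage-versus-accuracy trade-off is what a week of shadow mode lets you measure before going live.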

4. Risk of Failure

What happens when the AI gets it wrong? The consequences vary dramatically by channel.

- Email: Low risk. If the AI sends an incorrect response, the customer replies to correct it, and a human agent takes over. The interaction is asynchronous — there is no live audience watching the AI fail in real time. You can also use confidence gating to send only high-confidence responses automatically and queue everything else for human review.

- Chat: Medium risk. Failures happen in real time — the customer sees the wrong answer instantly and their frustration builds within the conversation. However, handoff to a human agent is relatively seamless if the platform supports it.

- Voice: High risk. A voice AI that misunderstands a spoken question, responds with the wrong information, or sounds robotic creates an immediately negative experience. There is no "undo" — the customer heard it. And customers who call support (rather than emailing or chatting) tend to have lower tolerance for AI, making failures more damaging to CSAT.

Winner: Email is the safest channel to automate. Failures are contained, recoverable, and often invisible to the customer (if confidence gating is used).
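Confidence gating as described above reduces to a threshold check in front of the send step. A minimal sketch; the `Draft` and `ConfidenceGate` names and the 0.85 default threshold are hypothetical, not any particular product's API:

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Draft:
    ticket_id: str
    reply: str
    confidence: float  # model confidence in the draft, 0 to 1


@dataclass
class ConfidenceGate:
    threshold: float = 0.85  # hypothetical value; tune it in shadow mode
    sent: List[Draft] = field(default_factory=list)
    review_queue: List[Draft] = field(default_factory=list)

    def route(self, draft: Draft) -> str:
        """Auto-send high-confidence drafts; queue the rest for a human."""
        if not 0.0 <= draft.confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")
        if draft.confidence >= self.threshold:
            # A real deployment would send the reply through the helpdesk here.
            self.sent.append(draft)
            return "sent"
        self.review_queue.append(draft)
        return "queued"


gate = ConfidenceGate()
print(gate.route(Draft("T-1001", "Your refund has been issued.", 0.93)))          # sent
print(gate.route(Draft("T-1002", "It sounds like the device is faulty.", 0.61)))  # queued
```

Because a queued draft never reaches the customer, a wrong low-confidence answer costs an agent's review time rather than a visible failure.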

5. Volume and Scalability

The channel with the most volume offers the biggest absolute savings when automated.

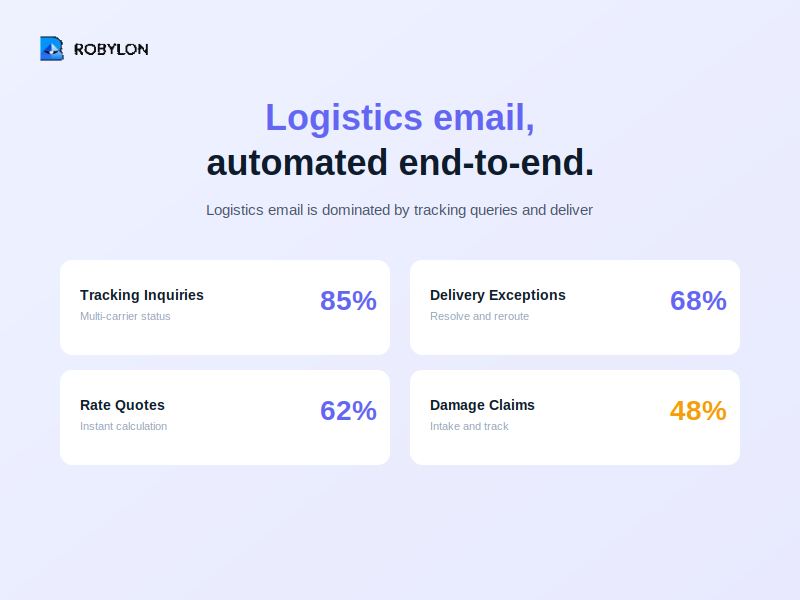

- Email: Typically 40–60% of total support volume for most businesses. Email remains the single largest channel despite the growth of chat and messaging.

- Chat: 20–35% of volume. Growing, but still smaller than email for most B2B and mid-market companies. Higher share for D2C and e-commerce.

- Voice: 10–30% of volume. Declining as a share of total support but remains significant for industries like finance, healthcare, telecom, and travel.

Winner: Email has the highest volume in most businesses, which means the highest absolute savings when automated.
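Dimensions 1, 2, and 5 multiply together: savings scale with volume, with the share AI resolves, and with the gap between human cost and AI cost per resolution. A rough sketch using the midpoint of each range quoted above, plus two assumptions not stated in this section: 10,000 total tickets per month, and a flat $1.50 AI cost per resolution (borrowed from the budget example later in this article) applied to every channel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Channel:
    name: str
    volume_share: float     # fraction of total tickets on this channel
    cost_per_ticket: float  # human-handled cost, USD
    automation_rate: float  # fraction the AI fully resolves


def monthly_savings(ch: Channel, monthly_tickets: int, ai_cost_per_resolution: float) -> float:
    """Savings = tickets AI resolves x (human cost - AI cost) per resolution."""
    automated = monthly_tickets * ch.volume_share * ch.automation_rate
    return automated * (ch.cost_per_ticket - ai_cost_per_resolution)


# Midpoints of the ranges quoted in this article (shares are not normalized to 100%).
CHANNELS = [
    Channel("email", 0.50, 10.0, 0.70),
    Channel("chat", 0.275, 5.5, 0.525),
    Channel("voice", 0.20, 13.0, 0.40),
]

ranked = sorted(CHANNELS, key=lambda c: monthly_savings(c, 10_000, 1.50), reverse=True)
for ch in ranked:
    print(f"{ch.name:<6} ${monthly_savings(ch, 10_000, 1.50):,.0f}/month")
```

Under these assumptions email saves about $29,750/month, voice $9,200, and chat $5,775. Voice edges out chat because its higher per-call cost outweighs its lower automation rate; email leads because it combines the largest volume with the highest automation rate.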

6. Customer Tolerance for AI

How receptive are customers to AI responses on each channel?

- Email: High tolerance. Customers are accustomed to automated emails (order confirmations, shipping notifications, marketing). An AI support response that is accurate, personalized, and well-formatted is, for most customers, hard to tell apart from a human one. In fact, many prefer the speed: getting a correct answer in 5 minutes rather than waiting 4 hours for a human to reply.

- Chat: Moderate tolerance. Customers increasingly accept chatbots on websites, but expectations vary. Many still mentally associate the chat widget with "there's a person behind this." When they realize it is a bot, some disengage. Transparency ("I'm an AI assistant") is important here.

- Voice: Lower tolerance. Customers calling support usually want a human. IVR fatigue from decades of "press 1 for billing, press 2 for support" has made many customers hostile to automated phone systems. Voice AI must be exceptionally good — natural-sounding, low-latency, context-aware — to be accepted. And even then, a meaningful percentage of callers immediately say "agent" or "representative."

Winner: Email customers are the most receptive to AI, which directly translates to higher satisfaction scores for AI-resolved interactions.

7. Learning and Improvement Potential

How quickly does the AI improve on each channel?

- Email: Highest learning potential. Email generates a steady, high-volume stream of text data. Every resolved email trains the AI on new phrasings, edge cases, and resolution patterns. The data is clean (no speech-to-text errors) and rich (emails contain more context than chat messages). The improvement cycle is continuous and measurable.

- Chat: Good learning potential, but data is noisier. Chat messages are shorter, more ambiguous, and often require multi-turn exchanges to surface the real question. The AI learns from these interactions but needs more data points to reach the same level of understanding as email.

- Voice: Lowest learning potential. Voice data must be transcribed (speech-to-text) before the AI can learn from it, introducing errors. Accents, background noise, and emotional speech reduce transcription quality. The learning cycle is slower and less reliable than text-based channels.

Winner: Email provides the cleanest, richest data for AI improvement.

The Scorecard

Summarizing across all seven dimensions (scored 1–3, where 3 is best):

- Email: Cost savings potential (2) + Automation rate (3) + Deployment speed (3) + Low risk (3) + Volume (3) + Customer tolerance (3) + Learning potential (3) = 20/21

- Chat: Cost savings potential (1) + Automation rate (2) + Deployment speed (3) + Low risk (2) + Volume (2) + Customer tolerance (2) + Learning potential (2) = 14/21

- Voice: Cost savings potential (3) + Automation rate (1) + Deployment speed (1) + Low risk (1) + Volume (1) + Customer tolerance (1) + Learning potential (1) = 9/21
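The totals can be reproduced with a short tally, which also makes it easy to check how sensitive the ranking is to the equal weighting the scorecard uses. The 3x weight on cost below is a hypothetical example, not part of the original scorecard.

```python
DIMENSIONS = ("cost", "automation", "deployment", "risk", "volume", "tolerance", "learning")

# Scores from the scorecard above, in DIMENSIONS order (1-3, where 3 is best).
SCORES = {
    "email": (2, 3, 3, 3, 3, 3, 3),
    "chat": (1, 2, 3, 2, 2, 2, 2),
    "voice": (3, 1, 1, 1, 1, 1, 1),
}


def weighted_total(scores, weights=None):
    """Sum per-dimension scores; dimensions missing from weights count once."""
    weights = weights or {}
    return sum(s * weights.get(d, 1) for d, s in zip(DIMENSIONS, scores))


for channel, scores in SCORES.items():
    # Equal weights, then cost per ticket weighted 3x.
    print(channel, weighted_total(scores), weighted_total(scores, {"cost": 3}))
# email 20 24
# chat 14 16
# voice 9 15
```

Even with cost per ticket weighted three times as heavily as every other dimension, email (24/27) stays ahead of chat (16/27) and voice (15/27).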

Email wins by a wide margin — not because it is more exciting than chat or voice, but because the fundamentals (high volume, high automation rate, low risk, fast deployment, high tolerance) compound into superior ROI.

The Right Sequencing Strategy

This analysis does not mean you should only automate email. It means you should automate email first — and use the learnings, confidence, and cost savings to fund and accelerate chat and voice automation afterward.

Phase 1: Email (Weeks 1–6)

Deploy AI email resolution. Target 60–80% auto-resolution. The cost savings from email automation are immediate and significant — typically enough to fund the next phase without additional budget approval.

Key benefit: The knowledge base, escalation rules, and AI tuning you build for email directly transfer to chat. You are not starting from zero when you expand.

Phase 2: Chat (Weeks 4–10, overlapping with email)

Deploy the same AI agent on your website chat widget. The knowledge base is already built and validated from email. Chat-specific adjustments include shorter response format, real-time latency optimization, and proactive engagement triggers. The AI is already trained on customer questions from email — it recognizes the same intents in chat immediately.

Phase 3: Voice (Weeks 8–16)

Deploy AI voice agents for your phone channel. By now, your AI understands your customers deeply — from thousands of emails and chat conversations. Voice-specific work includes STT/TTS configuration, latency optimization, and conversational pacing. But the core intelligence (intent detection, knowledge retrieval, action-taking) transfers directly from the text channels.

Phase 4: Omnichannel Optimization (Ongoing)

With AI deployed across email, chat, and voice, optimize the cross-channel experience. A customer who emails about an issue and later calls about the same issue should encounter an AI that knows the full context. Unified analytics show which channels perform best for which query types, enabling intelligent routing: "For refund requests, email AI resolves 85% — route there. For urgent delivery issues, voice AI resolves 60% and has higher CSAT — offer a callback."
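The routing rule in that example amounts to looking up, for each query type, which channel performs best. A minimal sketch; the stats table is hypothetical (only the 85% email refund rate and 60% voice delivery rate echo the example), and ranking by resolution rate with CSAT as a tie-breaker is one possible policy among several:

```python
from typing import Dict, Tuple

# Hypothetical stats: query type -> channel -> (AI resolution rate, CSAT out of 5).
STATS: Dict[str, Dict[str, Tuple[float, float]]] = {
    "refund": {"email": (0.85, 4.3), "chat": (0.70, 4.1), "voice": (0.45, 3.9)},
    "urgent_delivery": {"email": (0.50, 3.6), "chat": (0.55, 3.8), "voice": (0.60, 4.4)},
}


def best_channel(query_type: str, fallback: str = "human_agent") -> str:
    """Pick the channel with the highest AI resolution rate, breaking ties on CSAT.

    Unknown query types fall back to a human queue."""
    by_channel = STATS.get(query_type)
    if not by_channel:
        return fallback
    # Tuples compare element by element: resolution rate first, then CSAT.
    return max(by_channel, key=lambda ch: by_channel[ch])


print(best_channel("refund"))           # email
print(best_channel("urgent_delivery"))  # voice
print(best_channel("warranty_claim"))   # human_agent
```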

What About WhatsApp and Social?

WhatsApp and social messaging channels (Instagram DMs, Facebook Messenger) behave like a hybrid of email and chat. Messages are text-based (good for AI), asynchronous (like email — no real-time pressure), and high-volume in certain markets (India, Latin America, MENA).

For businesses where WhatsApp is a primary customer channel, automate it alongside or immediately after email. The AI handles WhatsApp messages the same way it handles email — parsing text, detecting intent, retrieving knowledge, taking actions. The knowledge base is shared. The deployment effort is minimal once email AI is running.
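Sharing one engine across email and WhatsApp depends on normalizing every inbound message into the same shape before intent detection and knowledge retrieval. A minimal sketch under heavy assumptions: the message type, keyword table, and knowledge-base entries are all hypothetical, keyword matching is a deliberately crude stand-in for a trained intent model, and action-taking is omitted.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InboundMessage:
    channel: str  # "email", "whatsapp", "chat", ...
    sender: str
    text: str


# Hypothetical shared knowledge base and intent keywords.
INTENT_KEYWORDS = {
    "refund": ("refund", "money back"),
    "order_status": ("order status", "where is my order", "tracking"),
}
KNOWLEDGE_BASE = {
    "refund": "You can request a refund from the Orders page of your account.",
    "order_status": "Your tracking link is in your order confirmation email.",
}


def detect_intent(text: str) -> Optional[str]:
    lowered = text.lower()
    for intent, keywords in INTENT_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return intent
    return None


def handle(msg: InboundMessage) -> Optional[str]:
    """One pipeline for every text channel; only the reply format differs.

    Returns None when the message should be escalated to a human."""
    intent = detect_intent(msg.text)
    if intent is None:
        return None
    answer = KNOWLEDGE_BASE[intent]
    if msg.channel == "email":
        return f"Hi {msg.sender},\n\n{answer}\n\nBest regards,\nSupport Team"
    return answer  # WhatsApp and chat get the short form


print(handle(InboundMessage("whatsapp", "Priya", "refund?")))
print(handle(InboundMessage("email", "Sam", "Where is my order #1234?")))
```

Adding a channel in this design means adding a way to build an `InboundMessage` and, optionally, a reply format; intent detection and the knowledge base stay shared.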

Why This Matters for Budget Conversations

When presenting AI automation to leadership, the "email first" strategy is far easier to fund than "let's build a voice bot." Here is why:

- Lower risk: With confidence gating, low-confidence email drafts go to human review instead of the customer, so most failures are never seen. Voice failures are audible and immediate. Leadership funds low-risk initiatives more readily.

- Faster proof: Email AI delivers measurable results in 2–4 weeks. Voice AI takes 2–3 months. A shorter proof cycle builds internal confidence faster.

- Clear ROI math: "We handle 5,000 email tickets/month at $8 each. AI resolves 70% (3,500 tickets) at $1.50 each. Savings: 3,500 × $6.50 = $22,750/month." This math is straightforward and hard to argue with.

- Self-funding expansion: The savings from email automation fund chat and voice deployment — no additional budget request needed for subsequent phases.
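The arithmetic in the ROI example works out as follows: 70% of 5,000 is 3,500 AI-resolved tickets, each saving $8.00 - $1.50 = $6.50, for $22,750/month. A short calculator using only the figures in that example also shows how the result moves across the 60–80% email automation range cited earlier:

```python
def email_savings(tickets_per_month: int, human_cost: float,
                  automation_rate: float, ai_cost: float) -> float:
    """Monthly savings from moving AI-resolved tickets off human agents.

    Tickets the AI does not resolve still cost human_cost before and after,
    so they cancel out; only the automated share contributes to savings.
    """
    ai_resolved = tickets_per_month * automation_rate
    return ai_resolved * (human_cost - ai_cost)


for rate in (0.60, 0.70, 0.80):
    print(f"{rate:.0%} automated: ${email_savings(5_000, 8.00, rate, 1.50):,.0f}/month")
# 60% automated: $19,500/month
# 70% automated: $22,750/month
# 80% automated: $26,000/month
```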

Bottom Line

Email is not the most exciting channel to automate, but it is the most valuable. It has the highest volume, the highest achievable automation rate, deployment as fast as any channel, the lowest risk, the highest customer tolerance, and the richest learning data. Starting with email and expanding to chat and voice is not just a good strategy; it is the strategy that delivers the highest cumulative ROI in the shortest time.

The companies that get this right do not automate one channel in isolation. They build an AI-first support operation that starts with email, expands to chat and messaging, and eventually handles voice — all from a shared knowledge base and a unified AI engine.

Start where the ROI is highest. Robylon AI resolves email tickets end-to-end — then extends the same AI to chat, voice, and WhatsApp from one platform. Email first. Everything else follows. Start free at robylon.ai

FAQs

What is the recommended sequencing for automating email, chat, and voice?

Phase 1 (Weeks 1–6): Deploy AI email resolution — target 60–80% auto-resolution. Build and validate your knowledge base, system integrations, and escalation rules. Phase 2 (Weeks 4–10, overlapping): Extend the same AI to web chat — the KB and integrations are already built. Adjust for shorter, real-time responses. Phase 3 (Weeks 8–16): Deploy voice AI — by now the AI deeply understands your customers. Voice-specific work is STT/TTS and latency tuning. Phase 4 (Ongoing): Omnichannel optimization — unified context across all channels.

Why is email lower risk to automate than chat or voice?

Email failures are asynchronous and recoverable. If the AI sends an incorrect response, the customer replies to correct it and a human takes over — there is no live audience watching the AI fail. With confidence gating, low-confidence emails are not sent at all — they are queued for human review. Chat failures happen in real time (customer sees the wrong answer instantly). Voice failures are the worst — the customer hears the wrong information and there is no "undo." This risk profile makes email the safest channel to start automating.

How does automating email first help automate chat and voice later?

Everything built for email transfers directly: the knowledge base, escalation rules, intent taxonomy, system integrations (OMS, CRM, billing), and AI tuning — all carry over when you expand to chat and voice. The AI has already been trained on thousands of customer questions from email, so it recognizes the same intents immediately on chat. Additionally, the cost savings from email automation (typically $150K–$250K/year for mid-size teams) directly fund chat and voice deployment — no additional budget request needed.

What is the achievable automation rate for email vs chat vs voice?

Email: 60–80% auto-resolution. Emails contain rich context, follow predictable patterns, and the asynchronous nature gives AI time to process accurately. Chat: 40–65%. Messages are shorter and more ambiguous, requiring more clarification turns. Voice: 30–50%. Speech understanding (accents, noise, emotion) is harder than text parsing, and callers have lower patience for AI. Comparing like with like (the low ends, midpoints, or high ends of each range), email's automation rate runs about 30 percentage points above voice.

Which support channel should I automate with AI first?

Email. It scores highest, or ties for highest, on six of the 7 ROI dimensions: highest volume (40–60% of total tickets), highest achievable automation rate (60–80%), fastest deployment (1–4 weeks, tied with chat), lowest risk (failures are asynchronous and recoverable), highest customer tolerance for AI, and richest learning data. Only on starting cost per ticket does voice score higher. Start with email, and use the savings and learnings to fund chat and voice automation afterward.
