Quality assurance in email support has a fundamental scaling problem. A QA analyst can review 40–60 email responses per day. If your team handles 5,000 emails per month, that analyst reviews 800–1,200 of them over about 20 working days, roughly 16–24% of volume if they do nothing else. Most QA programs actually review 5–10%, because the analyst also coaches agents, writes guidelines, and attends calibration sessions.

This means 90–95% of your email responses go out unreviewed. Quality issues — wrong information, missed questions, poor tone, compliance violations — are discovered only when a customer complains or when a sample happens to catch the error days later. By then, the damage is done.

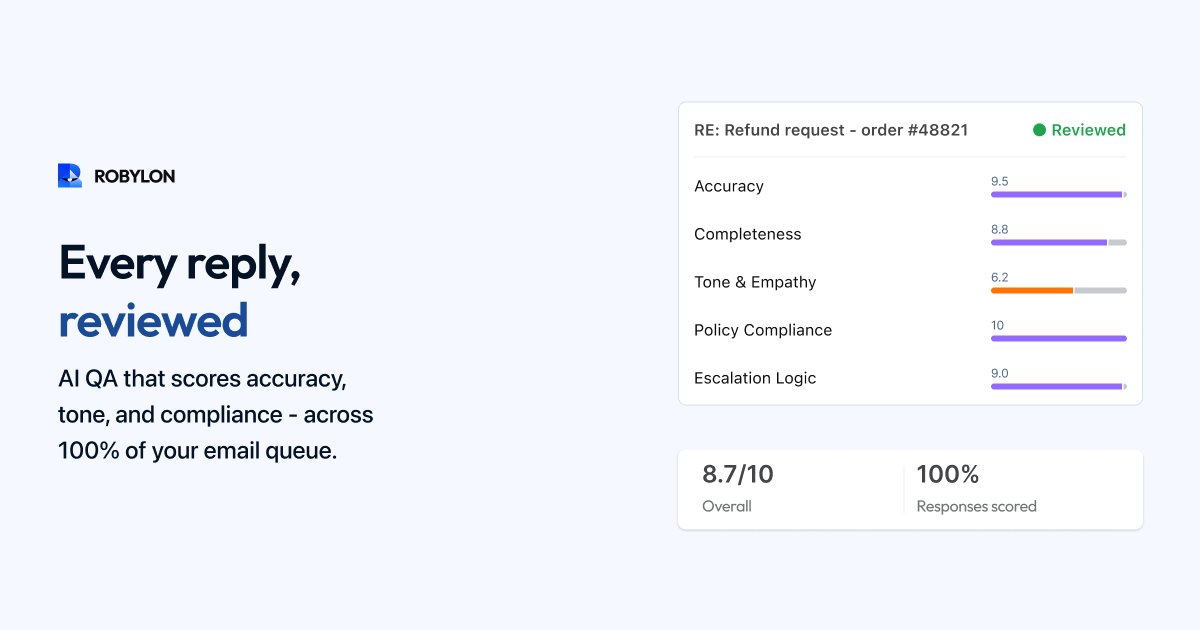

AI changes this equation entirely. AI-powered QA scores 100% of email responses, both human-written and AI-generated, in real time across multiple quality dimensions. No sampling. No delays. Every email, every time.

The Five QA Dimensions for Email

1. Accuracy

Does the response contain correct information? AI QA checks every factual claim in the response against the knowledge base and connected systems. If the agent says "Your refund will arrive in 3–5 business days" but the payment system shows the refund has not been initiated, the AI flags the discrepancy. If the response quotes a return window of 30 days but the current policy is 14 days, the AI catches it.

Accuracy scoring requires the AI to have access to the same knowledge base and systems the support team uses. This is why QA works best when integrated with an AI email platform — the QA engine and the resolution engine share the same data layer.
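Here is a minimal sketch of claim-level accuracy checking. It assumes the QA engine can read the current return-window policy and the refund status from the shared data layer. The `GroundTruth` fields and both regular expressions are hypothetical stand-ins. A production system would more likely extract claims with a language model or parser than with fixed patterns.

```python
import re
from dataclasses import dataclass


@dataclass
class GroundTruth:
    """Hypothetical snapshot of facts from the knowledge base and payment system."""
    return_window_days: int
    refund_initiated: bool


def check_accuracy(response: str, truth: GroundTruth) -> list[str]:
    """Flag factual claims in a response that contradict the ground truth."""
    text = response.lower()
    flags = []

    # Claim: a stated return window, e.g. "30-day return" or "return ... within 30 days".
    match = re.search(r"(\d+)[- ]day return", text) or re.search(
        r"return[^.]*?within (\d+) days", text
    )
    if match and int(match.group(1)) != truth.return_window_days:
        flags.append(
            f"Accuracy: return window stated as {match.group(1)} days; "
            f"current policy is {truth.return_window_days} days."
        )

    # Claim: a refund arrival timeline while no refund has been initiated.
    if "refund will arrive" in text and not truth.refund_initiated:
        flags.append("Accuracy: refund timeline stated, but no refund has been initiated.")

    return flags
```

Both of the article's examples would fire for a response that promises a refund arrival and quotes a 30-day window when policy is 14 days and no refund exists.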

2. Completeness

Did the response address every question the customer asked? Email customers frequently include multiple questions in one message. "Can you check my order status, update my shipping address, and tell me if I can add an item to the order?" contains three distinct requests. AI QA parses the original email for all intents and verifies that the response addresses each one. Missed questions are flagged with specific highlighting — "Customer asked about adding an item to the order, but the response did not address this."
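The intent-coverage check can be sketched with a small keyword catalogue. The intent names, trigger phrases, and evidence phrases below are hypothetical. Real intent detection would use a classifier or language model, but the structure is the same: detect every request in the email, then look for evidence that the response addresses each one.

```python
# Hypothetical intent catalogue: trigger phrases that detect a request in the
# customer email, and evidence phrases that suggest the response addressed it.
INTENTS: dict[str, tuple[list[str], list[str]]] = {
    "order status": (["order status", "where is my order"], ["shipped", "tracking", "status"]),
    "shipping address": (["shipping address", "change my address"], ["address"]),
    "adding an item": (["add an item", "add another item"], ["adding", "added to your order"]),
}


def detect_intents(email: str) -> list[str]:
    """Return every catalogued intent whose trigger phrase appears in the email."""
    text = email.lower()
    return [name for name, (triggers, _) in INTENTS.items()
            if any(t in text for t in triggers)]


def completeness_gaps(email: str, response: str) -> list[str]:
    """List detected intents that the response shows no evidence of addressing."""
    reply = response.lower()
    return [
        f"Customer asked about {name}, but the response did not address this."
        for name in detect_intents(email)
        if not any(e in reply for e in INTENTS[name][1])
    ]
```

Consider the three-part email from the example and a reply that covers only the order status and the address. The sketch reports the missing "adding an item" request.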

3. Tone and Brand Voice

Does the response match your brand's communication style? AI QA evaluates responses against your defined tone parameters: formality level (casual, professional, formal), empathy signals (acknowledgment of frustration, apology where appropriate), brand-specific language (words to use and avoid), and length appropriateness (not too terse, not excessively wordy). A fintech company might flag overly casual language. A D2C brand might flag responses that sound too corporate.
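The tone parameters above can be expressed as a configurable profile. This is a rule-based sketch: `ToneProfile`, its fields, and the word limits are hypothetical. Formality scoring in practice usually needs a model rather than word lists. Whole-word matching keeps a banned word like "hey" from firing inside "they".

```python
import re
from dataclasses import dataclass, field


@dataclass
class ToneProfile:
    """Hypothetical brand tone settings; values are illustrative, not recommendations."""
    avoid_phrases: list[str] = field(default_factory=list)
    empathy_phrases: list[str] = field(default_factory=list)
    min_words: int = 20
    max_words: int = 250


def tone_flags(response: str, profile: ToneProfile, customer_frustrated: bool) -> list[str]:
    text = response.lower()
    flags = []
    # Brand language: whole-word match so "hey" does not fire inside "they".
    for phrase in profile.avoid_phrases:
        if re.search(rf"\b{re.escape(phrase)}\b", text):
            flags.append(f"Tone: avoid '{phrase}'.")
    # Empathy: a frustrated customer should see at least one acknowledgment.
    if customer_frustrated and not any(p in text for p in profile.empathy_phrases):
        flags.append("Tone: no acknowledgment of the customer's frustration.")
    # Length appropriateness, measured in whitespace-separated words.
    n_words = len(text.split())
    if n_words < profile.min_words:
        flags.append(f"Tone: too terse ({n_words} words).")
    elif n_words > profile.max_words:
        flags.append(f"Tone: excessively wordy ({n_words} words).")
    return flags
```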

4. Compliance

Does the response follow regulatory and policy rules? This is critical for fintech (no financial advice, required disclaimers), healthcare (HIPAA considerations), and any industry with specific communication requirements. AI QA checks for prohibited language (guarantees, promises about outcomes), required disclaimers (regulatory notices, terms references), PII handling (is the response accidentally exposing sensitive data?), and policy adherence (are agents following current refund, return, and escalation policies?).
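Three of the four compliance checks (prohibited language, required disclaimers, PII exposure) reduce to pattern rules. The sketch below makes two assumptions: that a bare run of 8 or more digits is an unmasked account number, and that SSNs appear in the US `123-45-6789` format. Real deployments need locale-specific PII rules. Policy adherence depends on live policy data and is not covered here.

```python
import re

# Hypothetical PII patterns; real deployments need locale-specific rules.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
ACCOUNT_PATTERN = re.compile(r"\b\d{8,}\b")  # assumption: 8+ bare digits = unmasked account number


def compliance_flags(response: str, prohibited: list[str], required_disclaimers: list[str]) -> list[str]:
    text = response.lower()
    # Prohibited language must be absent; required disclaimers must be present.
    flags = [f"Compliance: prohibited language '{p}'." for p in prohibited if p.lower() in text]
    flags += [f"Compliance: missing disclaimer '{d}'." for d in required_disclaimers if d.lower() not in text]
    # PII handling: the raw response is scanned for sensitive identifiers.
    if SSN_PATTERN.search(response):
        flags.append("Compliance: response contains an SSN.")
    if ACCOUNT_PATTERN.search(response):
        flags.append("Compliance: response contains an unmasked account number.")
    return flags
```

A masked reference such as `****5678` passes, because only the last four digits are exposed.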

5. Resolution Quality

Will this response actually resolve the customer's issue, or will it generate a follow-up? AI QA evaluates whether the response is specific enough (not a generic template that does not address the actual question), actionable (the customer knows what to do next), and complete (no loose ends that will trigger another email). A response that says "Please check your order status on our website" when the customer specifically asked for their tracking number would score low on resolution quality.
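The tracking-number example can be turned into two heuristic checks:

- Does the response deflect the customer elsewhere?
- If the customer asked for a tracking number, does one appear?

The deflection phrases and the tracking-number shape (10–22 uppercase letters or digits, at least one digit) are hypothetical assumptions. Real carriers use varied formats.

```python
import re

# Hypothetical list of generic deflections that push the customer elsewhere.
GENERIC_DEFLECTIONS = [
    "check your order status on our website",
    "visit our help center",
    "refer to our faq",
]
# Assumption: a tracking number is 10-22 uppercase letters/digits with at least one digit.
TRACKING_PATTERN = re.compile(r"\b(?=[A-Z0-9]*\d)[A-Z0-9]{10,22}\b")


def resolution_flags(email: str, response: str) -> list[str]:
    flags = []
    if any(p in response.lower() for p in GENERIC_DEFLECTIONS):
        flags.append("Resolution: generic deflection instead of a specific answer.")
    if "tracking number" in email.lower() and not TRACKING_PATTERN.search(response):
        flags.append("Resolution: customer asked for a tracking number, but none was given.")
    return flags
```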

How AI QA Works in Practice

For AI-Generated Responses

Before an AI-generated email is sent to the customer, the QA layer reviews it. If the response scores above the quality threshold on all five dimensions, it sends automatically. If it scores below threshold on any dimension, it is flagged for human review with specific annotations — "Accuracy concern: the refund timeline stated does not match the payment system data" or "Completeness gap: the customer's question about loyalty points was not addressed."

This creates a quality gate that catches errors before they reach customers — not days later in a sampling review.
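A sketch of the pre-send gate, assuming each dimension has already been scored 1–5 and the scorers can attach a short note per dimension. The per-dimension threshold of 4 and the function name `qa_gate` are hypothetical. A response goes out only if every dimension clears the bar; otherwise it returns the annotations a reviewer would see.

```python
DIMENSIONS = ("accuracy", "completeness", "tone", "compliance", "resolution")


def qa_gate(scores: dict[str, int], notes: dict[str, str], threshold: int = 4) -> tuple[str, list[str]]:
    """Auto-send only if every dimension meets the threshold; otherwise flag with annotations."""
    failing = [d for d in DIMENSIONS if scores[d] < threshold]
    if not failing:
        return "auto_send", []
    annotations = [
        f"{d.capitalize()} concern: {notes.get(d, f'scored {scores[d]}/5')}"
        for d in failing
    ]
    return "human_review", annotations
```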

For Human-Written Responses

After an agent drafts a response but before it sends, AI QA can provide real-time feedback: "This response does not address the customer's second question about billing" or "The tone in paragraph 2 may come across as dismissive — consider acknowledging the customer's frustration first." The agent can adjust before sending, turning QA from a retrospective audit into a real-time coaching tool.

For teams that prefer not to add a review step to the agent workflow, AI QA can run asynchronously — scoring every sent response and surfacing issues to the QA team for follow-up. The QA team still reviews only the flagged responses (typically 10–20% of human-written emails), but they are reviewing the ones most likely to have issues rather than a random sample.
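For the asynchronous mode, the QA team's queue can be built by keeping only responses with a weak dimension and ordering them worst-first. `ScoredResponse` and the floor of 4 are hypothetical choices. Sorting by total puts the responses most likely to need follow-up at the top, instead of presenting them in random-sample order.

```python
from dataclasses import dataclass


@dataclass
class ScoredResponse:
    email_id: str
    agent: str
    scores: dict[str, int]  # five dimensions, each 1-5

    @property
    def total(self) -> int:
        return sum(self.scores.values())


def review_queue(sent: list[ScoredResponse], floor: int = 4) -> list[ScoredResponse]:
    """Keep responses with any dimension below `floor`, lowest total first."""
    flagged = [r for r in sent if min(r.scores.values()) < floor]
    return sorted(flagged, key=lambda r: r.total)
```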

QA Scoring Framework

A practical scoring system: each of the five dimensions scores 1–5, producing a total quality score of 5–25. Score bands: 22–25 is excellent (no action needed), 18–21 is good (minor coaching opportunity), 14–17 needs improvement (targeted coaching), and below 14 is critical (immediate intervention). Set your auto-send threshold for AI responses at a total of 20+ and also require at least 4 on every dimension (good to excellent on all dimensions). A total alone can hide one failing dimension: 5 + 5 + 5 + 5 + 1 = 21.
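The scoring framework maps directly to a few small functions. The band cut-offs come from the text. The per-dimension floor of 4 in `can_auto_send` is an assumption that reads "good to excellent on all dimensions" as a 4 or 5 on each one.

```python
def total_score(scores: dict[str, int]) -> int:
    """Sum five 1-5 dimension scores into a 5-25 total."""
    if len(scores) != 5 or not all(1 <= s <= 5 for s in scores.values()):
        raise ValueError("expected five dimension scores, each 1-5")
    return sum(scores.values())


def band(total: int) -> str:
    """Map a 5-25 total to the quality bands."""
    if total >= 22:
        return "excellent"
    if total >= 18:
        return "good"
    if total >= 14:
        return "needs improvement"
    return "critical"


def can_auto_send(scores: dict[str, int], threshold: int = 20, floor: int = 4) -> bool:
    """Auto-send when the total meets the threshold and no dimension falls below the floor."""
    return total_score(scores) >= threshold and min(scores.values()) >= floor
```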

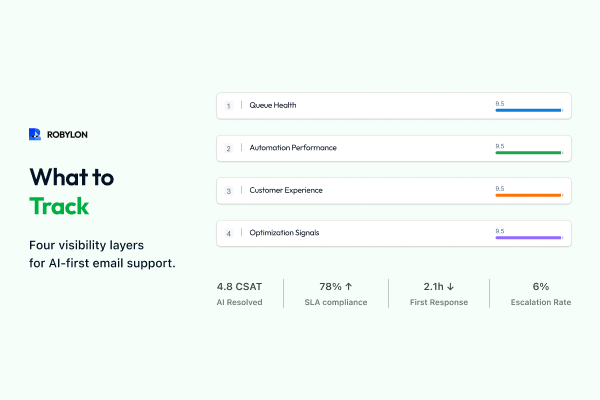

Aggregate these scores by agent, by email category, by time period, and by AI vs human. The patterns reveal where to invest coaching, which categories need better knowledge base content, and whether AI quality is trending up or down.
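The aggregation step is a group-by over scored records. This sketch assumes each record is a dict carrying hypothetical `agent`, `category`, `week`, `source` ("ai" or "human"), and `total` fields. Any of the first four can serve as the grouping key.

```python
from collections import defaultdict
from statistics import mean


def average_by(records: list[dict], key: str) -> dict[str, float]:
    """Average total QA score grouped by one field (agent, category, week, or source)."""
    groups: dict[str, list[int]] = defaultdict(list)
    for record in records:
        groups[record[key]].append(record["total"])
    return {k: round(mean(v), 2) for k, v in groups.items()}
```

Comparing `average_by(records, "source")` week over week shows whether AI quality is trending up or down relative to human responses.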

The QA Feedback Loop

AI QA produces a continuous stream of quality signals that feeds back into three improvement cycles. First, agent coaching: weekly reports show each agent's scores by dimension, with specific examples of high and low-scoring responses. Agents improve faster when feedback is specific, timely, and data-driven rather than based on a random sample reviewed days later. Second, knowledge base optimization: accuracy errors often trace back to outdated or incomplete KB articles. QA data identifies which articles are causing errors and prioritizes updates. Third, AI model improvement: low-scoring AI responses become training data for improving the model — the system learns from its mistakes and the quality scores trend upward over time.
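The knowledge base cycle can be sketched as a ranking of KB articles by how many low-accuracy responses cited them. This assumes each QA result records the source article it drew on, under a hypothetical `kb_article` field. The accuracy floor of 3 is also an illustrative choice.

```python
from collections import Counter


def kb_articles_to_fix(qa_results: list[dict], accuracy_floor: int = 3, top_n: int = 5) -> list[tuple[str, int]]:
    """Rank KB articles by how many low-accuracy responses cited them."""
    counts = Counter(
        r["kb_article"]
        for r in qa_results
        if r["scores"]["accuracy"] < accuracy_floor and r.get("kb_article")
    )
    return counts.most_common(top_n)
```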

Compliance-Specific QA for Regulated Industries

Fintech, healthcare, and insurance companies need QA that goes beyond general quality. AI compliance QA checks every outgoing email for: required disclaimers present (risk warnings, terms references), prohibited language absent (no guarantees of returns, no medical diagnoses), PII properly handled (account numbers masked, SSN never included), escalation triggers respected (regulatory complaints routed to compliance team, not resolved by AI), and audit trail maintained (every response linked to the knowledge source and decision logic that produced it).
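Two of these checks are structural rather than textual: escalation routing and the audit trail. The sketch below uses a hypothetical list of trigger phrases. A real system would classify regulatory complaints more robustly.

Each audit entry links a response to its KB sources and the decision taken. It stores a SHA-256 hash of the sent text as a tamper-evident fingerprint; the record layout itself is an assumption.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical trigger phrases for regulatory complaints.
ESCALATION_TRIGGERS = ("regulator", "ombudsman", "formal complaint")


def route(email_text: str) -> str:
    """Send regulatory complaints to the compliance team instead of AI resolution."""
    text = email_text.lower()
    if any(trigger in text for trigger in ESCALATION_TRIGGERS):
        return "compliance_team"
    return "ai_resolution"


def audit_record(email_id: str, response: str, kb_sources: list[str], decision: str) -> str:
    """Serialize one audit entry linking a response to its sources and decision."""
    record = {
        "email_id": email_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "response_sha256": hashlib.sha256(response.encode("utf-8")).hexdigest(),
        "kb_sources": kb_sources,
        "decision": decision,
    }
    return json.dumps(record, sort_keys=True)
```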

This automated compliance checking is often more consistent than human review, because the AI does not get fatigued, does not skip steps on busy days, and applies rules uniformly across 100% of emails rather than a 5% sample.

Bottom Line

Manual QA that reviews 5–10% of emails days after they are sent is better than no QA, but it is not good enough for 2026. AI-powered QA reviews 100% of responses in real time, catches errors before they reach customers, provides continuous coaching data for agents, and maintains compliance across every interaction. The combination of AI email resolution and AI QA creates a closed loop: the AI resolves emails, QA scores them, errors feed back into improvement, and quality trends upward continuously.

Score every email, not just 5%. Robylon AI includes built-in QA scoring across accuracy, tone, compliance, and completeness — for both AI-generated and human-written responses. Start free at robylon.ai

FAQs

How does AI QA create a feedback loop for continuous improvement?

Three improvement cycles: 1) Agent coaching: weekly reports show each agent's scores by dimension with specific examples, so feedback is timely and data-driven rather than based on a random sample reviewed days later. 2) KB optimization: accuracy errors trace back to outdated or incomplete articles, which shows which content to fix first. 3) AI model improvement: low-scoring AI responses become training data, so the system learns from its mistakes and quality trends upward. The result is quality improvement that compounds over time.

What are the five QA dimensions for AI email support?

1) Accuracy: Is every factual claim correct? Verified against KB and live systems. 2) Completeness: Does the response address every question in the email? 3) Tone and brand voice: Does it match formality, empathy, and language standards? 4) Compliance: Are required disclaimers present and prohibited language absent? 5) Resolution quality: Will this response actually close the issue, or will it generate a follow-up? Each dimension scores 1–5, producing a total quality score of 5–25.

How does AI QA handle compliance in regulated industries?

AI compliance QA checks every outgoing email for: required disclaimers present (risk warnings, terms references), prohibited language absent (no financial guarantees, no medical diagnoses), PII handling (account numbers masked, SSNs never included), escalation triggers respected (regulatory complaints routed to compliance team), and audit trail maintained (every response linked to its source KB article and decision logic). This automated checking is more consistent than human review because the AI applies rules uniformly across 100% of emails without fatigue.

What QA scoring threshold should I set for auto-sending AI emails?

Using a 5–25 scale (5 dimensions × 1–5 each): 22–25 (auto-send): excellent on all dimensions. 18–21 (auto-send with logging): good, minor coaching opportunity. 14–17 (route to human review): needs improvement on one or more dimensions. Below 14 (block and escalate): critical issues detected. To start, set your auto-send threshold at 20+ with no dimension below 4, which means 18–19 responses also go to human review at first. Then adjust based on observed accuracy and CSAT data.

How does AI QA review 100% of email responses?

AI QA runs automatically on every outgoing email — both AI-generated and human-written. For AI responses: QA scores the draft before sending; responses above the quality threshold send automatically, while below-threshold responses are flagged for human review with specific annotations. For human responses: QA provides real-time feedback before the agent sends ("This response doesn't address the customer's second question"), or scores asynchronously and surfaces the lowest-quality responses for QA team review. Either way, 100% of responses are scored, not a 5–10% sample.
